This page introduces the best pre-trained Stable Diffusion models for generating anime images. They can be used to create a wide range of anime-style art, including characters, backgrounds, and more.

The list covers Checkpoint Trained models, Checkpoint Merge models, and LoRAs. If you don’t intend to install Stable Diffusion locally, you can also try these models on Stable Diffusion websites or in the best anime AI art generators.

👍 For more SD models, make sure to also check out our complete list of the best Stable Diffusion models.


Animagine XL

Animagine XL is a high-resolution and anime-themed model with enhanced hand anatomy, improved concept understanding, and advanced prompt interpretation. It is designed for generating anime-style images based on textual prompts.

Animagine XL tops this list for its ability to generate images that closely resemble iconic characters from various anime titles, and for how well it combines character and background.

Like other anime-style Stable Diffusion models, Animagine XL supports Danbooru tags for image generation, allowing users to specify detailed attributes for the images they want to create.

The model is optimized for Danbooru-style tags rather than natural language prompts and is designed to generate more balanced NSFW content.
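As a rough illustration of what a Danbooru-style prompt looks like, the sketch below joins a list of tags into a comma-separated prompt string. The helper name `build_tag_prompt` and the example tags are assumptions chosen for illustration; they are not taken from the Animagine XL documentation.

```python
def build_tag_prompt(tags):
    """Join Danbooru-style tags into one comma-separated prompt string.

    Blank entries are dropped and duplicates are removed while keeping order.
    """
    seen = set()
    cleaned = []
    for tag in tags:
        tag = tag.strip()
        if tag and tag not in seen:
            seen.add(tag)
            cleaned.append(tag)
    return ", ".join(cleaned)


# Hypothetical example tags: subject count, character attributes, then setting.
prompt = build_tag_prompt(
    ["1girl", "silver hair", "blue eyes", "school uniform", "outdoors", "1girl"]
)
print(prompt)
```

Short, specific tags like these tend to suit tag-trained models better than a full natural-language sentence.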

Download Animagine XL from Civitai

Counterfeit

Counterfeit is a series of Stable Diffusion 1.5 checkpoints designed primarily for generating high-quality anime-style images.

It can be used to create highly detailed and intricate images with cinematic lighting and striking visual effects.

Counterfeit-V3.0 is acclaimed for its versatility and quality. It has been described as “easily top-tier”, with no other model coming close at generating poses, points of view, styles, people, nature, cities, and clothes.

The Counterfeit series includes Counterfeit-V1.0, Counterfeit-V2.0, Counterfeit-V2.5, and the latest, Counterfeit-V3.0, which is highlighted for its effective results and greater expressiveness.

Download Counterfeit from Civitai

MeinaMix

MeinaMix is a checkpoint model for Stable Diffusion that specializes in creating anime-style images. It is one of the most downloaded anime style checkpoints on Civitai. The model is designed to produce good art with minimal prompting.

Its recommended settings include Hires. fix with the R-ESRGAN 4x+ Anime6B upscaler, which improves image quality, especially the faces and eyes of characters that are far away in the scene.

MeinaMix V11 is a widely used version of the model and is often merged with other models to enhance its capabilities. For instance, MeinaMix Flat is a merge of MeinaMix V11 with helloFlatArt v1.2a.

The recommended parameters for using MeinaMix include a sampler of DPM++ SDE Karras with 20 to 30 steps, a CFG Scale of 4 to 11, and resolutions of 512×768, 512×1024 for portrait images, and 768×512, 1024×512, 1536×512 for landscape images.
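The recommended ranges above can be captured in a small check, sketched below. The class and function names are hypothetical and not part of any Stable Diffusion tool; only the sampler name, step range, CFG range, and resolutions come from the recommendations quoted above.

```python
from dataclasses import dataclass

# Values taken from the MeinaMix recommendations quoted above.
RECOMMENDED_SAMPLER = "DPM++ SDE Karras"
PORTRAIT_SIZES = {(512, 768), (512, 1024)}
LANDSCAPE_SIZES = {(768, 512), (1024, 512), (1536, 512)}


@dataclass
class GenerationSettings:
    sampler: str
    steps: int
    cfg_scale: float
    width: int
    height: int


def check_meinamix_settings(s):
    """Return a list of warnings for values outside the recommended ranges."""
    warnings = []
    if s.sampler != RECOMMENDED_SAMPLER:
        warnings.append(f"sampler is {s.sampler!r}, recommended {RECOMMENDED_SAMPLER!r}")
    if not 20 <= s.steps <= 30:
        warnings.append(f"steps={s.steps} is outside 20-30")
    if not 4 <= s.cfg_scale <= 11:
        warnings.append(f"cfg_scale={s.cfg_scale} is outside 4-11")
    if (s.width, s.height) not in PORTRAIT_SIZES | LANDSCAPE_SIZES:
        warnings.append(f"{s.width}x{s.height} is not a recommended resolution")
    return warnings


settings = GenerationSettings("DPM++ SDE Karras", steps=25, cfg_scale=7, width=512, height=768)
print(check_meinamix_settings(settings))  # an empty list means everything is in range
```

A check like this is only a convenience. Values outside the ranges still work; they are simply not what the model's author recommends.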

Download MeinaMix from Civitai

Cetus-Mix

Cetus-Mix is designed to generate anime art, and it is known for its exceptional 2D anime base model capabilities, offering improvements over previous models like the Andromeda-Mix.

It has been praised for its attention to shading and backgrounds, as well as for its hands-fix and highres-fix features.

It is suitable for various applications, such as anime scenery concept art and detailed illustrations.

The model’s author recommends using a high-resolution fix (upscaler) to avoid blurry images, specifically SwinIR_4x or R-ESRGAN 4x+ Anime6B.

The model page also lists recommended settings for optimal results: Clip skip 2, the DPM++ 2M Karras sampler, a step count, a CFG scale, and Pastel-Waifu-Diffusion.vae.pt as the VAE (variational autoencoder).

Download Cetus-Mix from Civitai

国风3 GuoFeng3

国风3 GuoFeng3 is a gorgeous Chinese antique-style model and an antique game character model with a 2.5D texture. It can be used for generating characters for Donghua (Chinese animation) and games.

GuoFeng3.2_Lora is the LoRA version of GuoFeng3.2. By integrating a LoRA based on noise offset training, the model can draw more beautiful light and shadow effects.

GuoFeng3.3 is a major update to GuoFeng3.2 that can adapt to full-body images. Even when the prompt tags are weak, the model automatically corrects the picture, although this can make the faces it produces look similar.

GuoFeng3.4 is a retrained version that adapts to full-body images. Its output is quite different from previous versions: the overall painting style has been adjusted to reduce over-fitting, which lets it work with more LoRAs.

Download GuoFeng3 from Civitai

Pastel-Mix

Pastel-Mix is a stylized latent diffusion model designed to produce high-quality, highly detailed anime-style images with just a few prompts.

It supports Danbooru tags for generating images, allowing users to specify details such as character features and settings to tailor the output to their preferences.

The model is noted for its ability to retain or enhance the pastel style, especially when using the recommended Latent upscaler setting. This setting is preferred over others like Lanczos or Anime6B, which may smooth out the images and remove the distinct pastel-like brushwork.

The settings recommended for optimal use include a sampler of DPM++ 2M Karras, 20 steps, a CFG scale of 7, and specific settings for denoising strength and upscaling to retain the pastel style effectively.
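For readers who drive Stable Diffusion through an API, the sketch below shows one way these settings might be expressed as a request body. It assumes the AUTOMATIC1111 web UI's `/sdapi/v1/txt2img` endpoint and its field names, which should be verified against your installed version (newer releases may split the scheduler out of the sampler name). The function name is hypothetical, and the denoising strength and upscale factor are left as arguments because no specific values are given above.

```python
import json


def pastel_mix_payload(prompt, denoising_strength, hr_scale):
    """Build a txt2img request body using the Pastel-Mix settings quoted above.

    denoising_strength and hr_scale are left to the caller because the text
    above does not state specific values for them.
    """
    return {
        "prompt": prompt,
        "sampler_name": "DPM++ 2M Karras",
        "steps": 20,
        "cfg_scale": 7,
        "enable_hr": True,        # turn on the high-resolution fix pass
        "hr_upscaler": "Latent",  # latent upscaling keeps the pastel brushwork
        "hr_scale": hr_scale,
        "denoising_strength": denoising_strength,
    }


# Placeholder values purely for illustration; tune them for your own setup.
payload = pastel_mix_payload("1girl, pastel colors, flower field", 0.6, 2)
print(json.dumps(payload, indent=2))
```

The resulting dictionary would be sent as the JSON body of a POST request; the sketch stops short of the network call so it runs without a server.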

Download Pastel-Mix from Civitai

Anime Pastel Dream

Anime Pastel Dream is a specialized Stable Diffusion Checkpoint model designed to generate anime-style artwork with a distinctive pastel aesthetic.

The creator of Anime Pastel Dream has developed two versions of the model: a “Hard” version, which emphasizes a stronger pastel style and encourages more creative outputs, and a “Soft” version, which offers a subtler pastel style that is easier to control.

For optimal results, the creator suggests specific settings: Clip skip 2, an optional ENSD (eta noise seed delta) of 31337, face restoration turned off, and a high-resolution fix with either a general upscaler at low denoising strength or a latent upscaler at high denoising strength. For versions with a baked-in VAE, set the VAE to “Auto”; for the no-VAE versions, pair the model with a good external VAE.
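As a hedged sketch, the creator's suggestions could map onto AUTOMATIC1111 web UI options roughly as follows. The option keys (`CLIP_stop_at_last_layers`, `eta_noise_seed_delta`, `sd_vae`) and the `"Automatic"` value are assumptions based on that web UI and may differ between versions; the function name is hypothetical.

```python
def anime_pastel_dream_overrides(baked_vae):
    """Map the creator's suggested settings onto web UI option keys.

    The option keys are assumptions based on the AUTOMATIC1111 web UI.
    """
    overrides = {
        "CLIP_stop_at_last_layers": 2,  # "Clip skip 2"
        "eta_noise_seed_delta": 31337,  # optional ENSD value
    }
    if baked_vae:
        # Assumption: "Automatic" is the option value behind the VAE dropdown's Auto choice.
        overrides["sd_vae"] = "Automatic"
    # For no-VAE versions, sd_vae is left unset so the user can pick a VAE file.
    return {"restore_faces": False, "override_settings": overrides}


print(anime_pastel_dream_overrides(baked_vae=True))
```

The high-resolution fix is left out here because the upscaler and denoising strength depend on which of the two suggested combinations you choose.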

The model is popular and available on various Stable-Diffusion-based websites like Leonardo.ai.

Download Anime Pastel Dream from Civitai

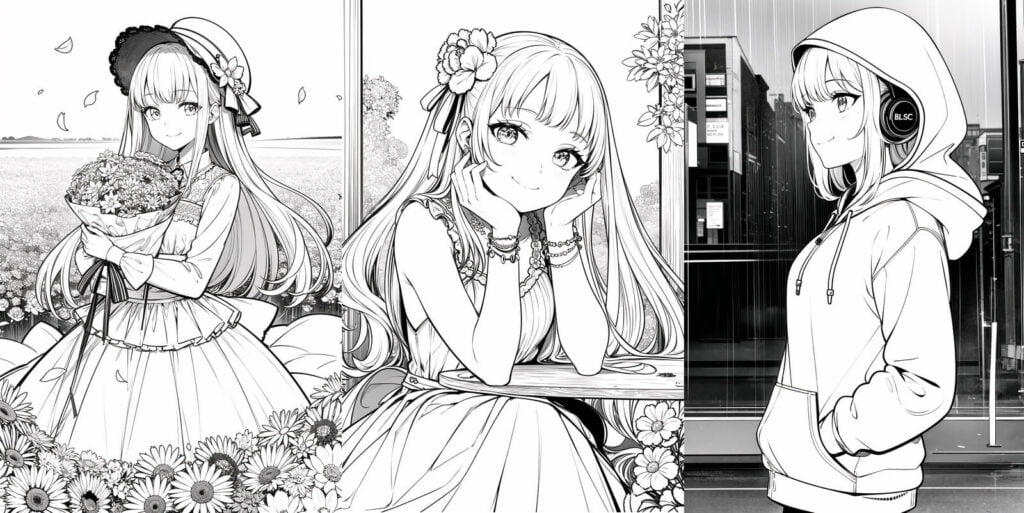

Anime Lineart / Manga-like

The Anime Lineart / Manga-like model helps generate artwork that mimics the style of anime and manga lineart, such as clean lines, dynamic poses, and expressive characters.

This model improves the generation of characters with complex limbs and of backgrounds, moving the overall styling towards a manga style rather than simple line art.

The output images resemble the distinctive line work and stylistic elements found in anime and manga illustrations.

Lowering the CFG (classifier-free guidance) scale makes the lines thinner, resulting in a more refined and detailed appearance.

The model is trained with a variety of base models, including Anything V4.5 and Orange Mix VAE, and uses DPM++ 2M Karras as the sampler.

It is recommended to use a high-resolution fix and adjust the weight of the LoRA or the prompt of “monochrome” for optimal results.
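Adjusting the LoRA weight is usually done in the prompt itself. The sketch below assumes the `<lora:name:weight>` prompt syntax used by the AUTOMATIC1111 web UI; the helper name and the file name `animeLineart` are hypothetical, so substitute the name of the LoRA file you actually downloaded.

```python
def with_lora(prompt, lora_name, weight):
    """Append a LoRA tag in the web UI's <lora:name:weight> form to a prompt."""
    return f"{prompt}, <lora:{lora_name}:{weight:g}>"


# "animeLineart" is a hypothetical file name used only for illustration.
base = "monochrome, lineart, 1girl, city street"
for weight in (0.6, 0.8, 1.0):
    print(with_lora(base, "animeLineart", weight))
```

Generating the same prompt at a few different weights makes it easier to compare how strongly the lineart style is applied.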

Download Anime Lineart / Manga-like from Civitai

DreamShaper

The DreamShaper V8 model is a specialized AI model that excels in generating anime-style images, among other types of content. It is derived from the powerful Stable Diffusion (SD 1.5) model and has been fine-tuned to produce high-quality images that are particularly relevant to anime and character generation.

DreamShaper 8 LCM is highlighted for its use in Stable Diffusion animation, where it helps users create anime animations. This version is particularly optimized for flicker-free animation workflows.

The model has been optimized to capture the distinct visual characteristics of anime, such as the stylized eyes and facial features that are typical of the genre.

Since version 7, DreamShaper has been upgraded to improve realism without sacrificing anime and art quality, as well as enhancing NSFW and character LoRA compatibility.

Download DreamShaper from Civitai

AniVerse

AniVerse by Samael1976 is trained on a large dataset of images, with over 890,000 training steps, and the model is available in different versions, including V1.2, V1.3, V1.5 and V2.0.

The anime-style images generated by AniVerse V2 model are characterized by their vibrant colors, detailed character designs, and fluid motion sequences.

The images generated by AniVerse V2 are not limited to the traditional anime style, but can also include elements of semi-realistic, cartoonish, or more realistic styles.

Images generated by AniVerse are highly detailed and realistic, with an emphasis on intricate shading and lighting to create a three-dimensional effect; they resemble the style often seen in modern comic books or graphic novels.

Download AniVerse from Civitai

FAQs

What are Stable Diffusion Anime Models?

Stable Diffusion anime models are AI models trained to generate anime-style images. They are Stable Diffusion checkpoints, merges, or LoRAs fine-tuned on anime-style image datasets, which is what lets them draw anime characters, backgrounds, and more.

How are Stable Diffusion Anime Models Different from Other AI Image Generators?

Stable Diffusion Anime Models offer greater control and customization compared to traditional AI image generators. Users can choose a model they like and load it on the image generator, allowing for more versatile and tailored content creation.

What are Some Popular Stable Diffusion Anime Models?

There are several popular Stable Diffusion Anime Models available, including Anything v3, Anything v5, DiscoMix Anime, OrangeMixs, Counterfeit, DreamShaper, Kenshi, Arcane Diffusion, AbyssOrangeMix3, MeinaMix, Cetus-Mix, CuteYukiMix, and Mistoon_Anime.

How Can Stable Diffusion Anime Models be Used?

Stable Diffusion Anime Models can be used for various purposes, such as creating fan art, original characters, books, comics, webtoons, and more. They are popular among anime and manga enthusiasts for enhancing content generation and providing entertainment on social media.

What are the Best Practices for Writing Stable Diffusion Anime Prompts?

Effective Stable Diffusion Anime Prompts require a blend of clarity, imagination, and an understanding of how generative models interpret and execute instructions. Best practices include being specific with character attributes, setting, mood, and color schemes, balancing creativity with clarity, using descriptive language, and understanding the model’s language.

What are Some Common Mistakes to Avoid When Using Stable Diffusion Anime Models?

Common mistakes include poor prompting, not understanding denoising strength, not giving the AI enough time, copying settings without understanding them, not iterating enough, and not understanding the resolution settings.

How Can Stable Diffusion Anime Models be Accessed and Used?

Stable Diffusion Anime Models can be downloaded from platforms like Civitai and Hugging Face. Users then load these models in an image generator to create anime-style art based on their prompts.